What is Dynamic Programming?

Introduction

Dynamic Programming is a programming technique of breaking tasks into smaller tasks and storing them to avoid repeating them. In a previous post, we discussed recursion as a useful tool for solving programming problems. Dynamic programming can be seen as an improvement or optimization of plain recursion. Instead of the brute force approach of recursion, we can optimize our code using this time-saving storage-based technique.

What's wrong with Recursion?

As you become more proficient as a programmer, you start to realize that there is more than just getting the task done: expert programmers focus on getting the task done in the most efficient way possible. This is why the study of algorithms and their efficiency is so important to writing great software. Consider again our recursive Fibonacci solution from the previous recursion blog post.

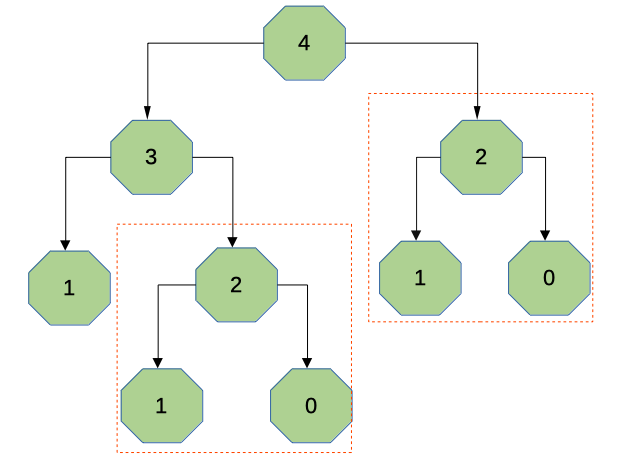

Let's look at a diagram of calculating the fourth number in the Fibonacci sequence:

We start at 4 and break it down to calculating 3 and 2. This is further broken down to 2 and 1, etc. However, in calculating the number this way, we end up with unnecessary repetition: see the red boxes around the calculation of 2. The same operation is performed twice.

Although this is a trivial example for illustration purposes, you can see how quickly this becomes a problem for larger numbers. Instead of calculating twice, wouldn't it be better to store the calculation once and look it up rather than repeating it unnecessarily? In mathematical terms, our brute force recursive solution ends up with exponential time complexity. Surely there is a better way?

Dynamic Programming to the Rescue!

Dynamic programming improves on the above by using the principle of Optimality. This is the theory that if all steps of a process are optimized than the entire result is optmized. The dynamic programming methodology is to look at all the sub-tasks in a solution, looking for repeated tasks and storing them in a look-up table of some kind.

Think about the pirate who needs to bury treasure regularly. If the pirate only stores the steps for finding the treasure as a sequence of steps, then every time they go to add to their chest they have to go through the sequence all over again. If instead, the pirate has a map with an "X" on it, there is no need for repetition.

Let's revisit our previous solution to calculating the Fibonacci sequence:

# Brute force fibonacci solution:

def fibonacci_sum(n):

# Special cases to stop function when necessary

if n == 0:

return 0

elif n == 1:

return 1

# Otherwise sum the previous two numbers

else:

return fibonacci_sum(n-1) + fibonacci_sum(n-2)

Now ask yourself how you might use dynamic programming to improve the efficiency of this code? Hint: What could function as a treasure map here? How could we store results to avoid having to repeat their calculation unnecessarily?

Try it out before looking at the solution below.

# Dynamic programming approach

look_up = {}

def fibo_sum_dynamic(n):

# Special cases to stop function when necessary

if n == 0:

return 0

elif n == 1:

return 1

elif n in look_up: # Check if we have the answer already

return look_up[n]

else:

# Store the calculation for later

look_up[n] = fibo_sum_dynamic(n-1) + fibo_sum_dynamic(n-2)

return look_up[n]

print(fibo_sum_dynamic(6))

Conclusion

The new Fibonacci calculation uses a python dictionary (or hash) as a look-up table to check if any sum has already been calculated to avoid repeating potentially time-consuming operations. This dynamic programming approach smoothes out execution time to nearly linear complexity which is a much more efficient result than before. Note that this is an improvement in terms of speed, the addition of a dictionary for look-up does increase the overall memory footprint of the program. Always keep these trade-offs in mind when using the dynamic programming approach.